Research

Our goal is to build technologies that help humans by understanding and applying the essence of the question "What is a human being?", starting from the sense of touch. To this end, we aim to create the theories and technologies needed for a society in which humans, machines, and AI coexist, building on knowledge and techniques from robotics and virtual reality.

Robotics

Hybrid tactile and force transmission: Remote transmission of material perception via avatar robot

We constructed a system that conveys material perception to the operator by transmitting force and vibrotactile information through an avatar robot.

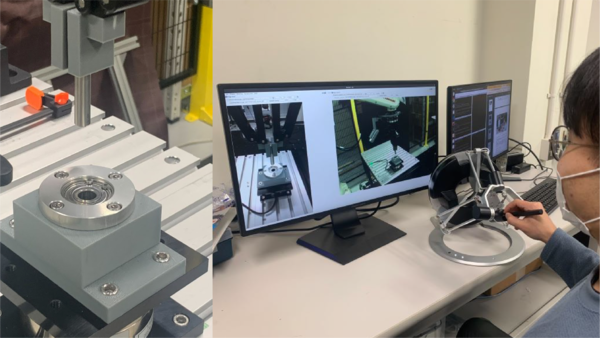

Remote assembly by industrial robot supported by tactile transmission

We developed a teleoperation support system for remote assembly by industrial robots. Transmitting force information to the operator improved workability.

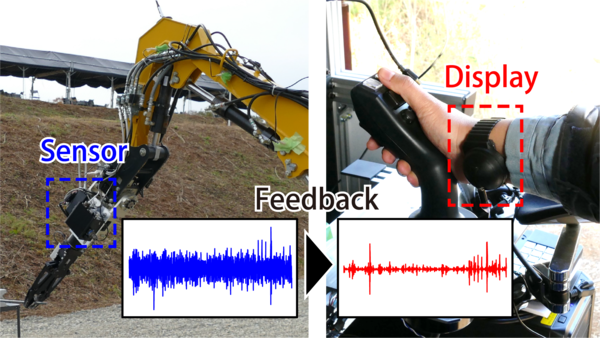

Emphasized transmission of vibrotactile information: Application to robot teleoperation support

We developed a system that converts raw vibrotactile information into a form that humans perceive easily and then transmits it. Applying this system to the teleoperation of construction machinery improved workability.

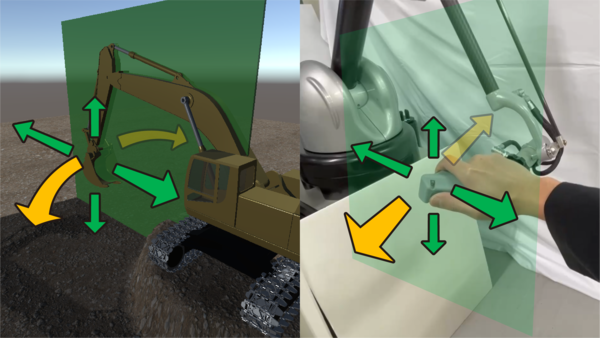

Hybrid control interface: Integration of appropriate operation methods for each subtask

We experimentally investigated appropriate operation methods for each subtask, such as digging and turning, and integrated the results to construct a control interface that achieves high operability and low burden.

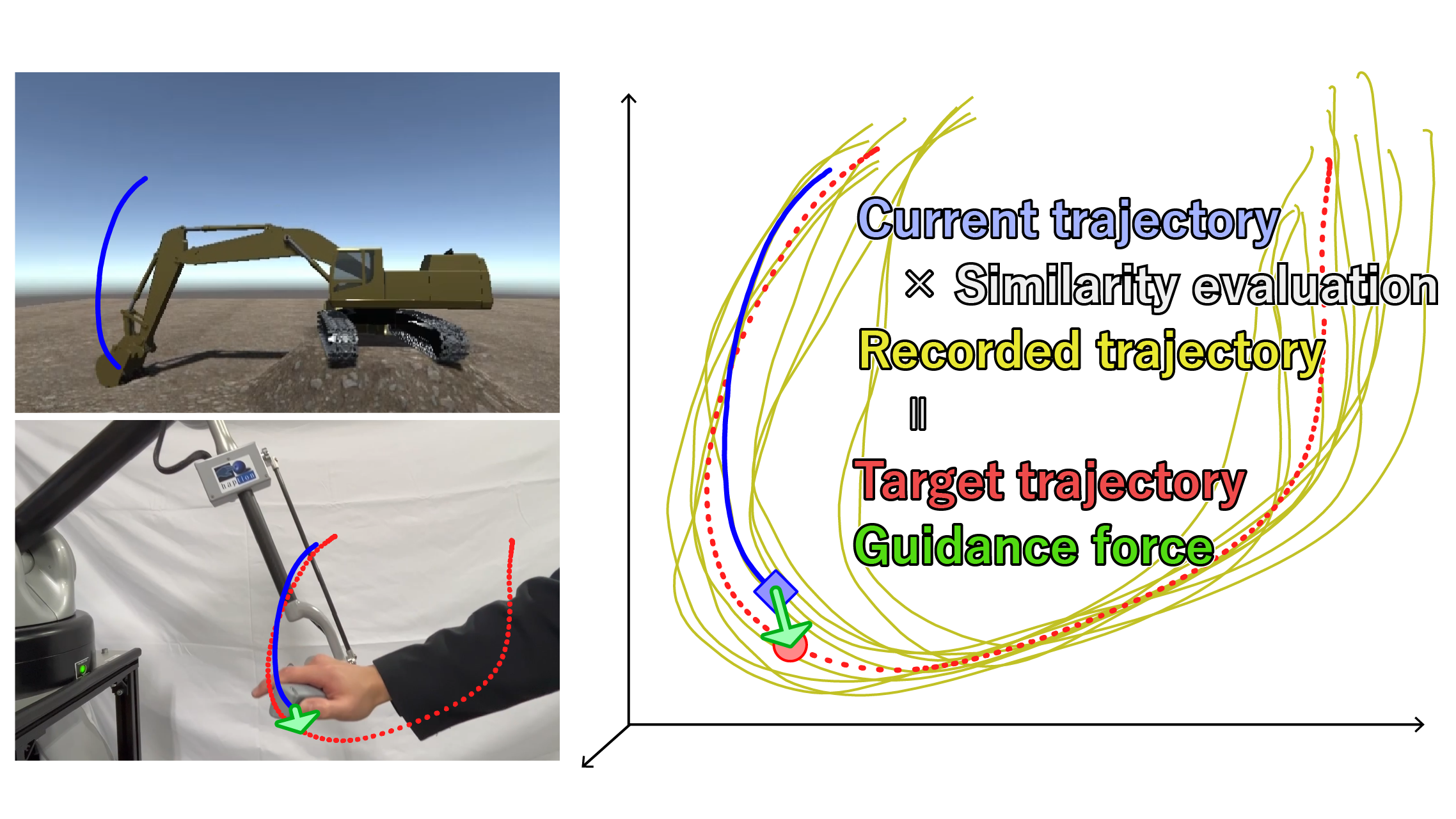

Machine-learning-based force assist for teleoperation of construction machinery

We developed a teleoperation support system that reduces the physical and mental burden on the operator. Based on prior learning of operation trajectories and real-time evaluation of trajectory similarity, it provides force feedback that guides the operator along the target trajectory.
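The guidance idea can be illustrated with a minimal sketch. It is not the system described above: the learned trajectory model and the similarity evaluation are replaced here by a plain nearest-point lookup on a fixed reference trajectory, and the names `nearest_point`, `assist_force`, `stiffness`, and `max_force` are hypothetical, chosen only for illustration.

```python
import math


def nearest_point(trajectory, position):
    """Return the reference point closest to the current position.

    Assumption: the reference trajectory is a plain list of points;
    the actual system learns it from prior operation data.
    """
    return min(trajectory, key=lambda p: math.dist(p, position))


def assist_force(trajectory, position, stiffness=50.0, max_force=10.0):
    """Spring-like guidance force pulling the input toward the trajectory.

    stiffness and max_force are illustrative values, not taken from the source.
    """
    target = nearest_point(trajectory, position)
    force = [stiffness * (t - x) for t, x in zip(target, position)]
    magnitude = math.hypot(*force)
    # Saturate the force so the assist never overpowers the operator.
    if magnitude > max_force:
        scale = max_force / magnitude
        force = [f * scale for f in force]
    return tuple(force)


if __name__ == "__main__":
    reference = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
    print(assist_force(reference, (1.0, 0.1)))
```

Capping the force magnitude is one simple way to keep the operator in control; a real interface would also need to account for device dynamics and safety limits.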

Investigation of interface mechanisms to improve operability of construction machinery

We experimentally compared the mechanisms of operation interfaces that enable intuitive operation input and low physical and mental strain.

Virtual Reality

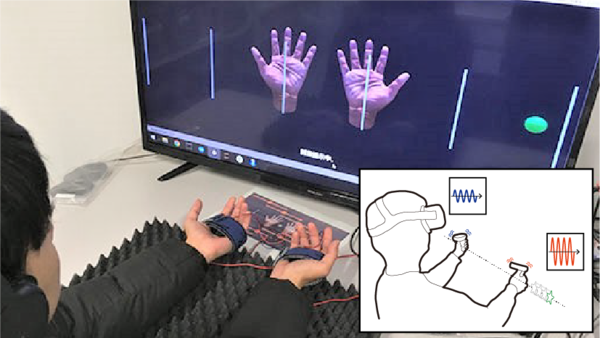

Remote handshake system with pressure distribution reproduction: Dynamic reproduction of interactive pressure distribution associated with handshake

To support telecommunication, we constructed a system that models the pressure distribution on the palm that occurs interactively with a handshake and reproduces it via a novel tactile display.

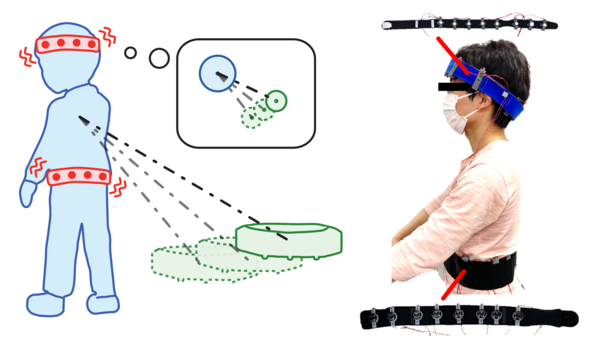

3D positional information presentation with multiple vibration stimuli

We constructed a system that enables humans to perceive 3D positional information of surrounding objects using vibration stimuli. We also constructed a model that dynamically controls multiple vibration stimuli according to the target position.

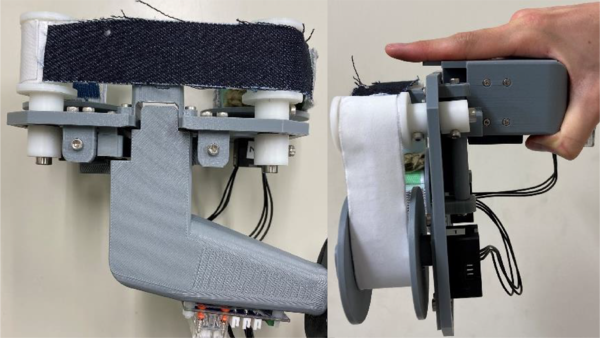

Material rotating tactile display for presenting various material sensations in VR

We constructed a system that presents the sensation of touching various materials in VR by dynamically controlling the winding and feeding of those materials in sync with the VR scene.

Extended phantom sensation: Vibrotactile-based movement sensation outside the inter-stimulus area

We extended the tactile illusion phenomenon called "phantom sensation" and constructed an algorithm to present the sensation of objects moving around the user.
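For background, the classic (non-extended) phantom sensation between two vibrators can be sketched with an energy-based panning model that is commonly used in haptics. The sketch below covers only a virtual point between the two actuators; it does not implement the extended algorithm described above, whose details are not given here. The function names and the normalized position `beta` are assumptions made for illustration.

```python
import math


def phantom_amplitudes(beta, amplitude=1.0):
    """Drive amplitudes for two actuators to place a phantom vibration.

    beta is the normalized position of the virtual point: 0.0 at
    actuator 1, 1.0 at actuator 2. The square-root weighting keeps the
    summed vibration energy (a1**2 + a2**2) constant along the path.
    """
    if not 0.0 <= beta <= 1.0:
        raise ValueError("beta must lie in [0, 1]")
    a1 = math.sqrt(1.0 - beta) * amplitude
    a2 = math.sqrt(beta) * amplitude
    return a1, a2


def sweep(steps, amplitude=1.0):
    """Amplitude pairs that move the phantom from actuator 1 to actuator 2."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [phantom_amplitudes(i / (steps - 1), amplitude) for i in range(steps)]


if __name__ == "__main__":
    for a1, a2 in sweep(5):
        print(f"{a1:.3f} {a2:.3f}")
```

Playing the amplitude pairs from `sweep` in sequence gives the impression of a single vibration travelling between the two actuators.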

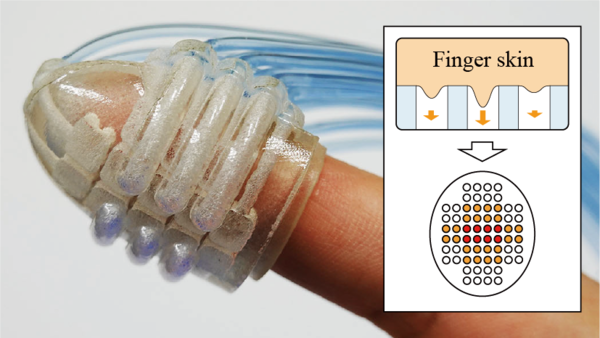

Wearable suction haptic display with spatiotemporal stimulus distribution on a finger pad

We constructed a wearable tactile display that presents distributed information on fingertip skin by spatio-temporally controlling suction pressure from a number of suction ports.

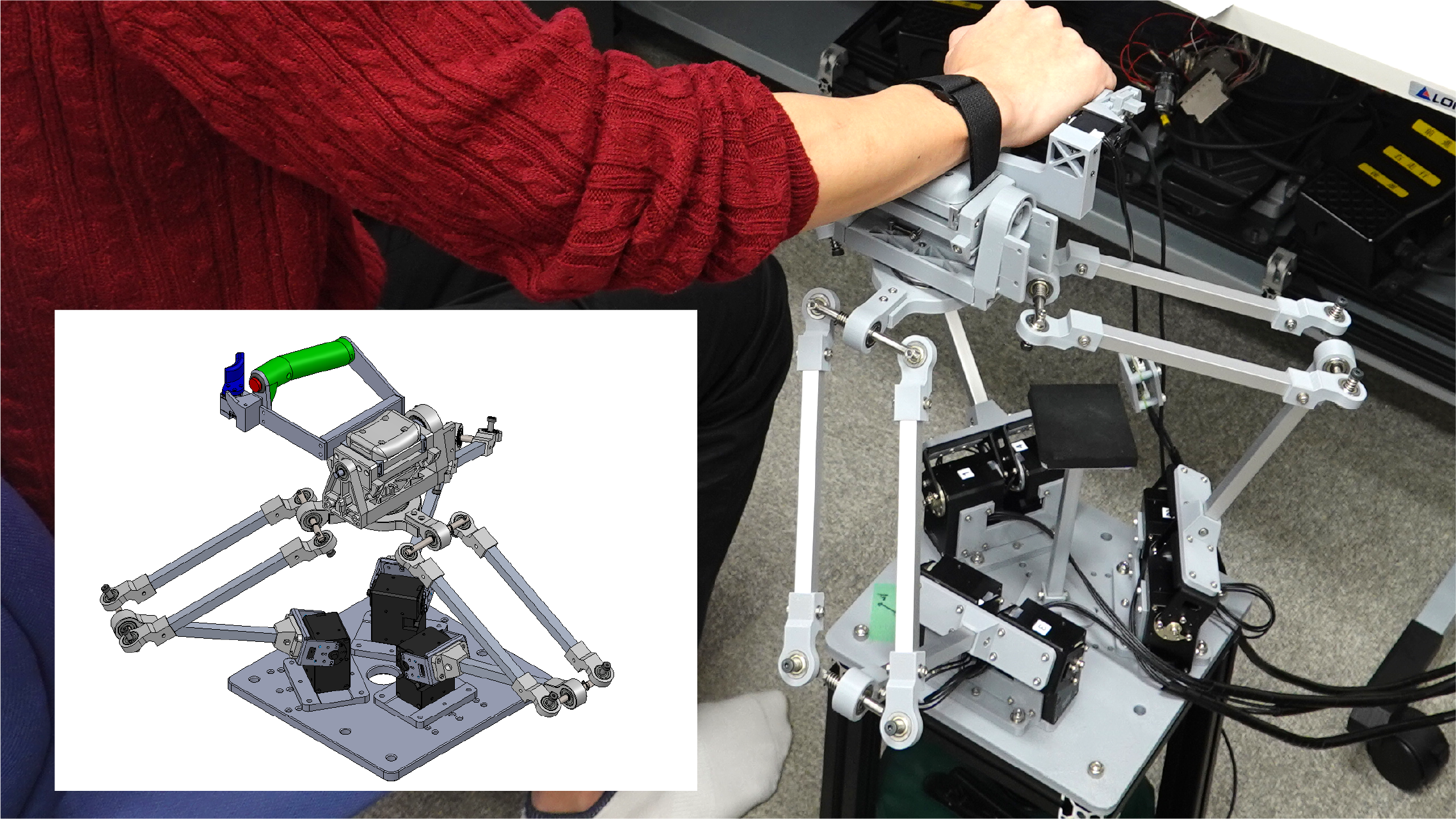

6-DOF fingertip skin deformation display using parallel link mechanism

We constructed a tactile display that controls the position and orientation of the fingertip contact surface in 6-DOF using a parallel link mechanism. The display can reproduce various object shapes as well as forces and torques applied to the fingertip.

Human Perception

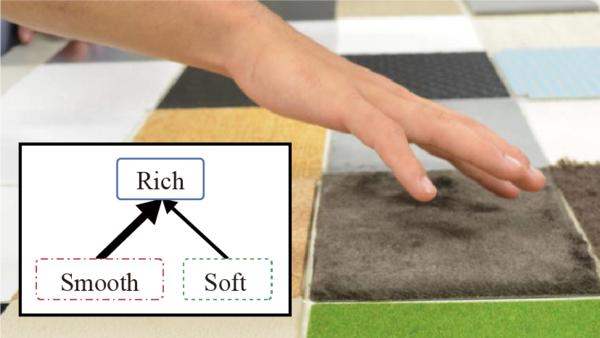

Multi-dimensional, multi-hierarchical model to represent human tactile diversity

The tactile sensations that humans perceive are diverse and can differ between individuals. We constructed a multidimensional, multilevel mathematical model to represent this diversity.

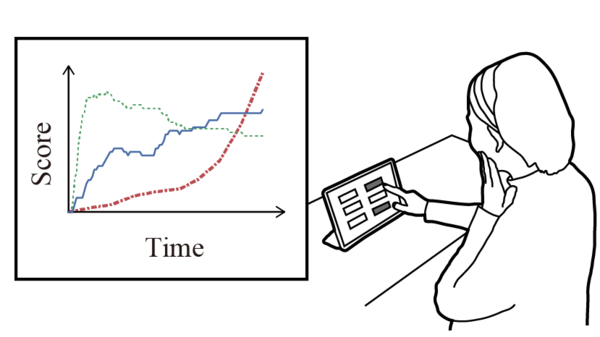

Time-series model of tactile sensation: Analysis of individual differences over time

Because tactile sensation arises from touching an object, it inherently changes over time. We constructed a model that captures this time series explicitly and makes it usable for engineering purposes.

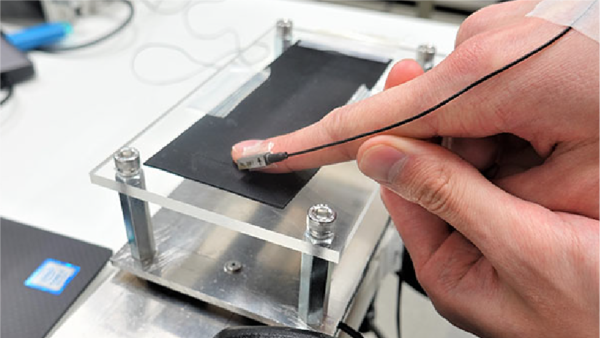

Tactile evaluation based on multiple simultaneous measurements of skin conditions

We performed simultaneous measurements of various factors that contribute to tactile sensation, such as skin vibration, skin deformation, and contact force.